An evaluation in isolation tells you your current score. What you actually want to know is whether the score moved. That’s what groups, the progression chart, and side-by-side comparison are for.

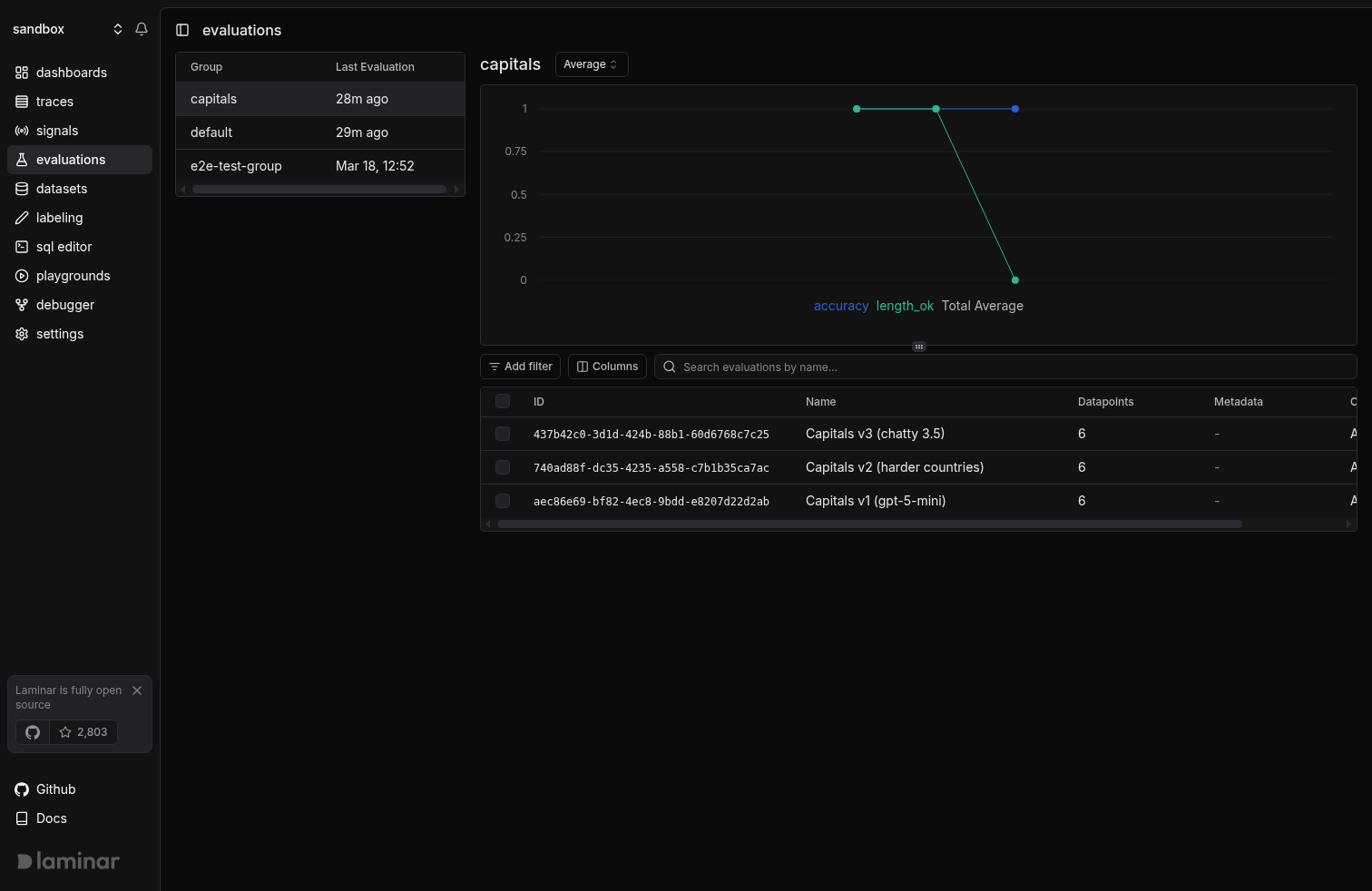

Group runs to compare them

Pass groupName (TypeScript) or group_name (Python) to evaluate(). Every run with the same group name lands together on the evaluations page.
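
As a minimal sketch in Python, a grouped run might look like the following. It assumes the lmnr package is installed and a project API key is configured in the environment. The dataset, the answer_capital executor, and the exact_match evaluator are hypothetical stand-ins, and length_ok mirrors the 50-character check discussed on this page. The data shape and evaluator signatures are assumptions about the SDK's usual convention, so confirm them against the SDK reference.

```python
from typing import Any

# Reuse the same group name on every run you want charted together.
GROUP_NAME = "capitals"

# Hypothetical two-row dataset: each row has an input ("data") and an expected value ("target").
DATA = [
    {"data": {"country": "France"}, "target": {"capital": "Paris"}},
    {"data": {"country": "Japan"}, "target": {"capital": "Tokyo"}},
]


def answer_capital(data: dict[str, Any]) -> str:
    """Hypothetical executor: a lookup standing in for a real model call."""
    known = {"France": "Paris", "Japan": "Tokyo"}
    return known.get(data["country"], "unknown")


def exact_match(output: str, target: dict[str, Any]) -> int:
    """Score 1 when the output equals the expected capital, else 0."""
    return int(output.strip() == target["capital"])


def length_ok(output: str, target: dict[str, Any]) -> int:
    """Score 1 when the output fits within 50 characters, else 0."""
    return int(len(output) <= 50)


def run_evaluation() -> None:
    # Imported lazily so the helpers above can be exercised without the SDK installed.
    from lmnr import evaluate

    evaluate(
        data=DATA,
        executor=answer_capital,
        evaluators={"exact_match": exact_match, "length_ok": length_ok},
        group_name=GROUP_NAME,  # same value on every run you want to compare
    )
```

Calling run_evaluation() after a model or prompt change, with GROUP_NAME left untouched, should add a new run to the existing group rather than starting a new one.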

Keep runs in the same capitals group whether you’re swapping models, prompts, or datasets. Changing the group name means Laminar can’t chart the runs together.

Read the progression chart

The evaluations page shows every run in a group, newest first, with the group’s average score for each dimension plotted across the top.

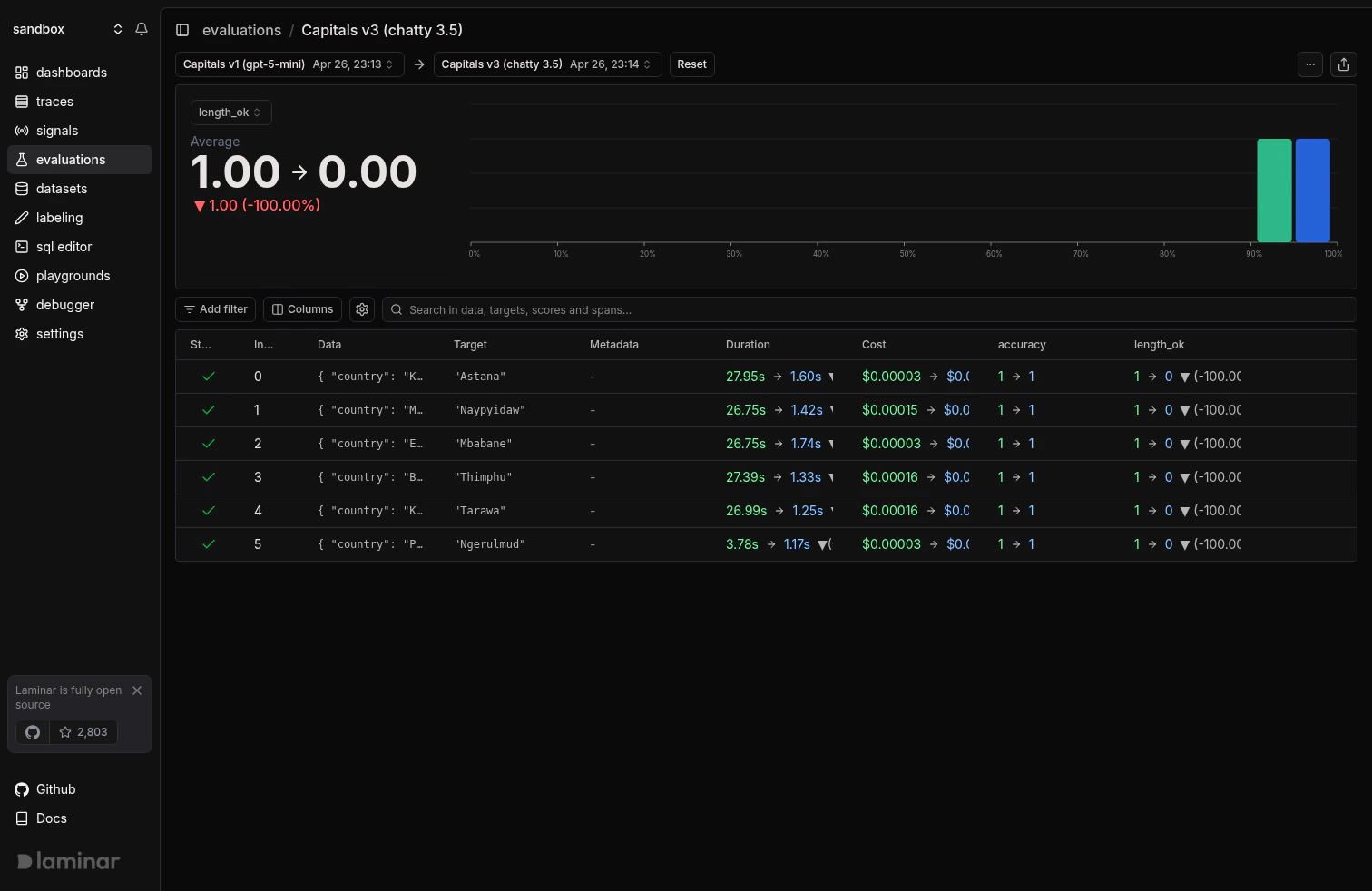

length_ok fell from 1.0 to 0.0 on the most recent run because the prompt was changed to ask for a one-sentence fun fact instead of a one-word answer. Every output now exceeds the 50-character limit the evaluator checks for.
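
To see why the average drops to zero, here is a small local sketch that recomputes a per-run average for length_ok. It does not call Laminar. The run labels and sample outputs are illustrative, not taken from a real evaluation, and the 50-character threshold is the limit mentioned above.

```python
from statistics import mean

MAX_CHARS = 50  # the limit the length_ok evaluator checks for


def length_ok(output: str) -> int:
    """Score 1 when the output fits within the character limit, else 0."""
    return int(len(output) <= MAX_CHARS)


# Illustrative outputs for two runs in the same group (not real run data).
runs = {
    "one-word prompt": ["Paris", "Tokyo"],
    "fun-fact prompt": [
        "Paris has been the capital of France for most of its history.",
        "Tokyo was called Edo until 1868, when it became the capital.",
    ],
}

# One average per run, which is the kind of value a progression chart plots.
averages = {
    name: float(mean(length_ok(output) for output in outputs))
    for name, outputs in runs.items()
}

for name, score in averages.items():
    print(f"{name}: length_ok average = {score:.1f}")
```

Every sentence-length output is longer than 50 characters, so each row scores 0 and the run average falls with it.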

Side-by-side comparison

Click any run to open its detail page. Use the Select compared evaluation dropdown to pick a second run from the same group. Laminar renders both score distributions on top of each other and shows per-row deltas.

The change in the average score (for example, 0.00 → 1.00) tells you the direction. The per-row deltas below tell you exactly which datapoints moved.
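
The per-row delta idea can be sketched locally as follows. This is not how Laminar computes the comparison view; it is an illustration under the assumption that both runs used the same dataset, with hypothetical row keys and scores.

```python
def per_row_deltas(
    baseline: dict[str, float], candidate: dict[str, float]
) -> dict[str, float]:
    """Return candidate minus baseline for every row present in both runs."""
    shared_rows = sorted(baseline.keys() & candidate.keys())
    return {row: candidate[row] - baseline[row] for row in shared_rows}


# Hypothetical scores for one dimension, keyed by datapoint.
baseline_scores = {"France": 0.0, "Japan": 0.0, "Kenya": 1.0}
candidate_scores = {"France": 1.0, "Japan": 0.0, "Kenya": 1.0}

deltas = per_row_deltas(baseline_scores, candidate_scores)

# Keep only the rows whose score actually changed between the two runs.
moved = {row: delta for row, delta in deltas.items() if delta != 0}

print(moved)
```

Rows that appear in only one run are skipped, which is one reason to keep the dataset constant across the runs you compare.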

Filter by group in the list

The evaluations list at /evaluations groups runs by default. Click a group in the sidebar, or visit /evaluations?groupId=<group-name> directly. The progression chart and run list scope to the selected group.
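
If you generate these links from scripts or CI output, a small helper can build the path with the group name safely encoded. The helper name is hypothetical, and only the /evaluations path and groupId parameter come from this page; prepend whatever base URL your project uses.

```python
from urllib.parse import urlencode


def evaluations_path(group_name: str) -> str:
    """Build the group-filtered evaluations path with the name URL-encoded."""
    return "/evaluations?" + urlencode({"groupId": group_name})


print(evaluations_path("capitals"))
```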

Export comparisons

Hit Download on the evaluation detail page to export the datapoints, scores, and executor outputs as CSV. Useful for external analysis or for building regression test suites out of the rows that failed. For anything beyond CSV, query the underlying table with SQL.

Next steps

Datasets

Keep the dataset constant across runs so comparisons are apples-to-apples.

SQL editor

Query evaluation_datapoints for bespoke comparisons and dashboards.

Manual API

The lower-level API when evaluate() is too opinionated.

SDK reference

Full parameters for evaluate, LaminarDataset, and EvaluationDataset.