Overview

OpenCode is an open-source AI coding agent. Its @opencode-ai/sdk package lets you call OpenCode programmatically: spin up a server, drive a session, and build products on top of the agent instead of using the TUI. Laminar makes those runs observable.

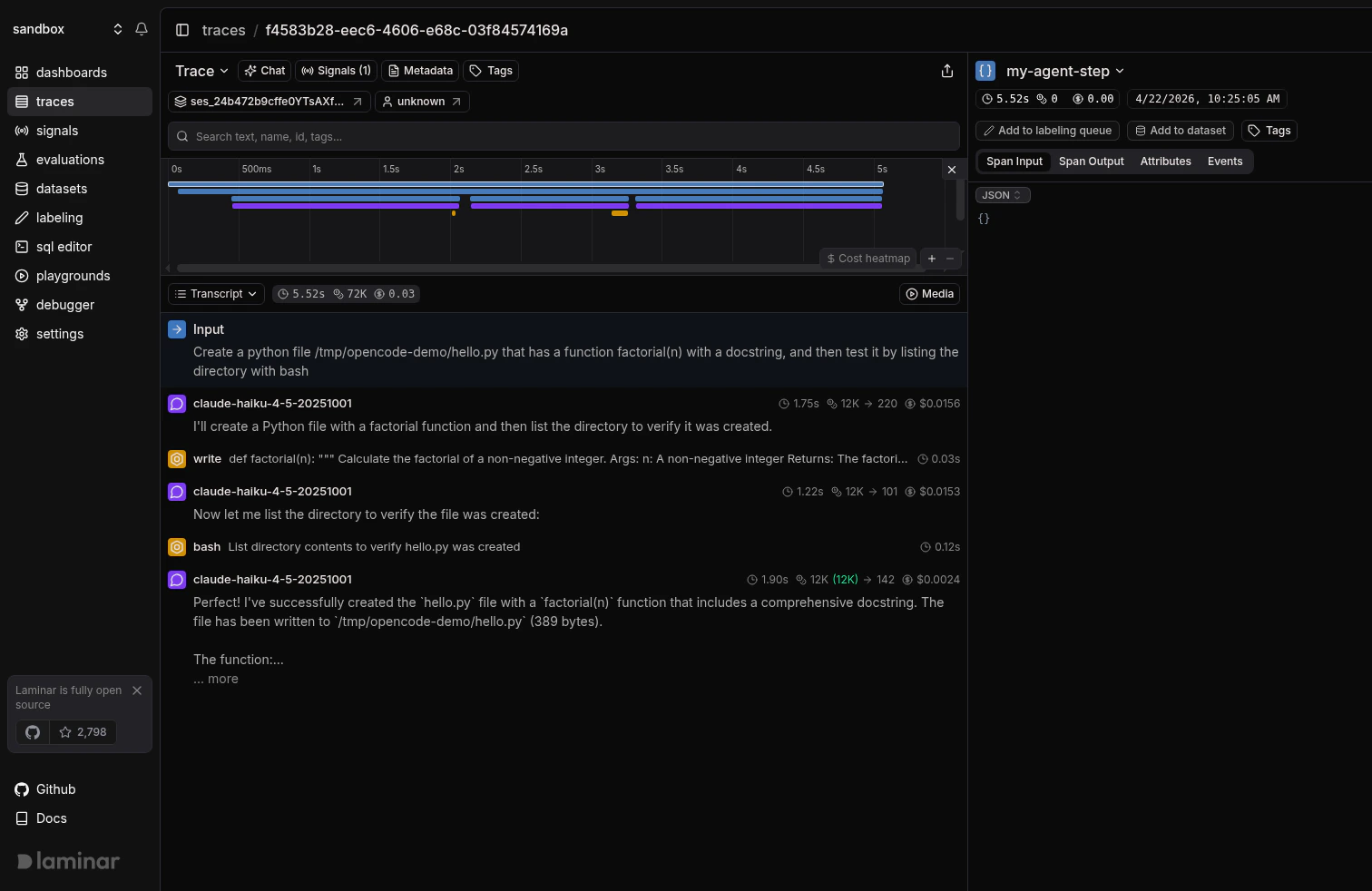

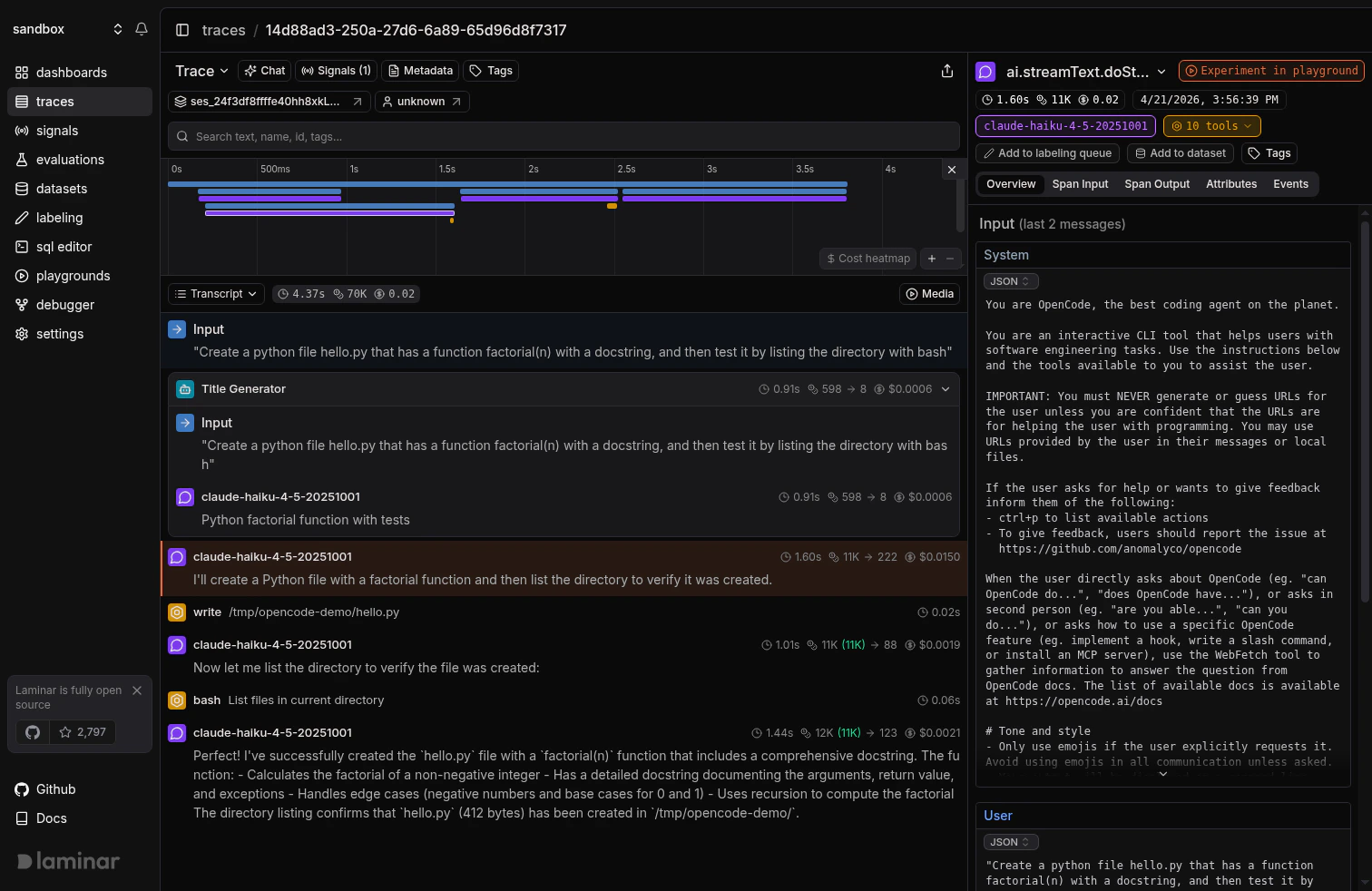

What Laminar captures:

- Every conversation turn, one trace per turn.

- LLM prompts, responses, token counts, latency, and cost.

- Tool calls (bash, read, write, edit, custom tools) with arguments and results.

- Sub-agents (the Title Generator, task runners) as nested spans.

- When you wrap client.session.prompt in observe, the server-side turn nests under your caller span, so the entire call stack lands in a single trace.

Calling OpenCode from TypeScript

Laminar ships two pieces that cooperate:

- @lmnr-ai/lmnr patches @opencode-ai/sdk on the caller side. When you wrap a client.session.prompt call in observe, the instrumentation injects the current span context into the request.

- @lmnr-ai/opencode-plugin runs inside the OpenCode server, picks up that context, and emits the turn, LLM, and tool-call spans as children of your caller span.

The result is one trace that runs from your observe block into the server’s turn span and all the way down to each LLM call.
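The hand-off between the two pieces is ordinary span-context propagation. The sketch below is a self-contained toy model and not Laminar's implementation. SpanContext, Carrier, injectContext, startServerTurn, and the traceparent-style header format are all hypothetical. It demonstrates two rules this page relies on. First, the caller injects context only when a span is active. Second, the server parents its turn span under whatever context arrives, or starts a new trace when nothing arrives.

```typescript
// Toy model of caller-to-server span-context propagation. Every name here is
// hypothetical and illustrates the idea only; it is not Laminar's code.

interface SpanContext {
  traceId: string;
  spanId: string;
}

interface Span extends SpanContext {
  name: string;
  parentSpanId?: string;
}

// Hypothetical request carrier, loosely modeled on a W3C traceparent header.
type Carrier = Record<string, string>;

let nextId = 0;
// Deterministic IDs (no dashes, so the header splits cleanly) keep the example reproducible.
function newId(prefix: string): string {
  nextId += 1;
  return `${prefix}${nextId}`;
}

// Caller side: inject context only when a span is active (e.g. inside observe).
function injectContext(active: SpanContext | undefined, carrier: Carrier): Carrier {
  if (active) {
    carrier["traceparent"] = `00-${active.traceId}-${active.spanId}-01`;
  }
  return carrier;
}

// Server side: parent the turn span under the incoming context, if there is one.
function startServerTurn(carrier: Carrier): Span {
  const [, traceId, parentSpanId] = carrier["traceparent"]?.split("-") ?? [];
  if (traceId && parentSpanId) {
    return { name: "turn", traceId, spanId: newId("s"), parentSpanId };
  }
  // No incoming context: the turn becomes the root of a brand-new trace.
  return { name: "turn", traceId: newId("t"), spanId: newId("s") };
}

// Inside an observe block (hypothetical span name): the turn joins the caller's trace.
const callerSpan: Span = { name: "my-observe-block", traceId: newId("t"), spanId: newId("s") };
const linkedTurn = startServerTurn(injectContext(callerSpan, {}));

// No active span, as with a standalone CLI run: the turn starts its own trace.
const standaloneTurn = startServerTurn(injectContext(undefined, {}));
```

In the real setup you do not write either half yourself: @lmnr-ai/lmnr does the injecting and @lmnr-ai/opencode-plugin does the extracting.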

Add the Laminar plugin to opencode.json

The caller-side instrumentation only injects context; the server-side plugin is what actually emits the turn and tool spans. Create (or edit) opencode.json next to your code and list @lmnr-ai/opencode-plugin under its plugin key. The JSON key is plugin, not plugins; OpenCode will reject the file otherwise.

OpenCode reads config from multiple locations, and the closest one wins: a file in the current project overrides ~/.config/opencode/opencode.json. If you want tracing on by default everywhere, put the plugin block in the global file. See OpenCode’s plugin docs for the full layering rules.

Set LMNR_PROJECT_API_KEY

The plugin reads LMNR_PROJECT_API_KEY from the environment of whatever process starts the OpenCode server. When you use opencode.createOpencode(), that’s the same process that runs your TypeScript code, so exporting the key in your shell is enough.

To get the project API key, go to the Laminar dashboard, open the project settings, and generate a project API key. This is available both in the cloud and in the self-hosted version of Laminar.

You can pass the key explicitly at Laminar initialization. If you don't, Laminar looks for it in the LMNR_PROJECT_API_KEY environment variable.

Initialize Laminar with the opencode module

If you import @opencode-ai/sdk before calling Laminar.initialize(), your local reference won’t be auto-wrapped. This is common when the project has "type": "module" in package.json, because ES module imports are evaluated before any code in the importing module runs. In that case, pass the module to Laminar.initialize() via instrumentModules. The instrumentation attaches to the SDK’s Session class, and every new OpencodeClient picks it up.

Trace structure

Because the caller-side instrumentation hands the span context to the server-side plugin, the trace comes back as a single tree. Your observe span is the root, the server’s turn span is its child, and every session.llm, model call, and tool call hangs off turn. There is no separate trace for the server: everything the OpenCode process does on this turn shows up nested under the TypeScript code that kicked it off.

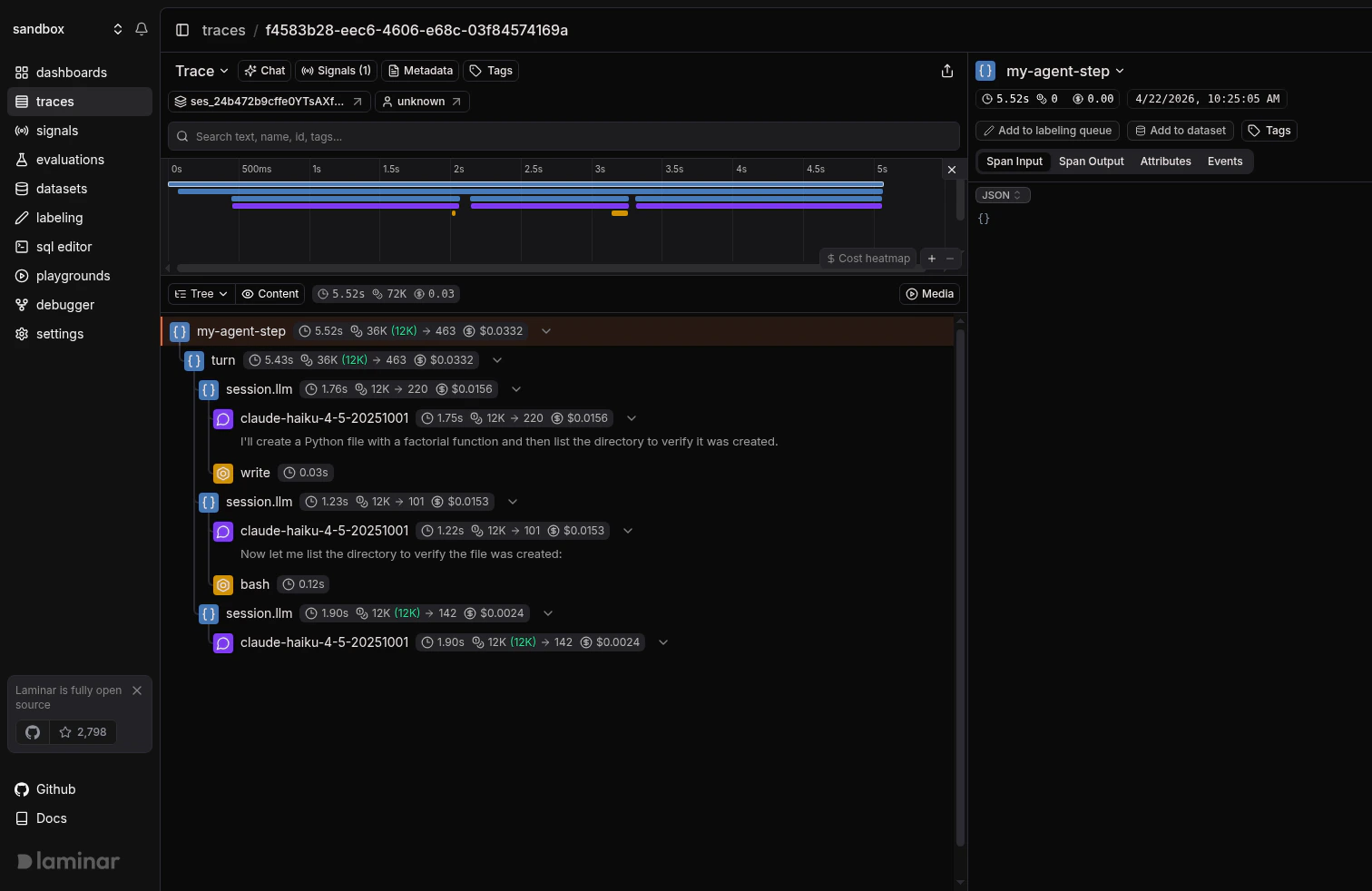

The tree view makes that nesting obvious.
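If you pull spans out programmatically, you can rebuild the same nesting from flat records that carry a parent ID. This sketch assumes a minimal, hypothetical record shape (id, parentId, name) rather than Laminar's actual export schema. The span names mirror the ones described above: your observe span, turn, session.llm, and a bash tool call. The code checks that the trace has exactly one root and renders it as an indented outline.

```typescript
// Hypothetical flat span record; Laminar's real span schema has more fields.
interface SpanRecord {
  id: string;
  parentId: string | null;
  name: string;
}

interface SpanNode {
  span: SpanRecord;
  children: SpanNode[];
}

// Link each record to its parent; records whose parent is absent become roots.
function buildTree(spans: SpanRecord[]): SpanNode[] {
  const nodes = new Map<string, SpanNode>();
  for (const span of spans) {
    nodes.set(span.id, { span, children: [] });
  }
  const roots: SpanNode[] = [];
  for (const node of nodes.values()) {
    const parent = node.span.parentId ? nodes.get(node.span.parentId) : undefined;
    if (parent) {
      parent.children.push(node);
    } else {
      roots.push(node);
    }
  }
  return roots;
}

// Render a subtree as an indented outline, two spaces per nesting level.
function render(node: SpanNode, depth = 0): string[] {
  const lines = [`${"  ".repeat(depth)}${node.span.name}`];
  for (const child of node.children) {
    lines.push(...render(child, depth + 1));
  }
  return lines;
}

// Illustrative trace for one SDK-driven turn (IDs and the root name are made up).
const spans: SpanRecord[] = [
  { id: "1", parentId: null, name: "my-observe-block" },
  { id: "2", parentId: "1", name: "turn" },
  { id: "3", parentId: "2", name: "session.llm" },
  { id: "4", parentId: "2", name: "bash" },
];

const roots = buildTree(spans);
```

A single-element roots array is the programmatic equivalent of "one trace". A CLI run has no caller span, so there turn would be the root.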

Using the OpenCode CLI

The plugin works the same way on a standalone opencode CLI run: add it to opencode.json, export LMNR_PROJECT_API_KEY, and every conversation turn gets traced. There’s no TypeScript caller to be the parent span, so each turn becomes its own top-level trace.

Install the OpenCode CLI

Follow OpenCode’s install guide. You do not need to install the plugin package yourself; OpenCode resolves it from npm on first run.

Configure the plugin and key

Use the same opencode.json plugin entry as in the SDK section above, and export LMNR_PROJECT_API_KEY in the shell you launch opencode from. You can also put the key in a .env file in the project directory; OpenCode loads it automatically.

Track outcomes with Signals

Traces answer what happened on this turn. Signals answer the cross-trace questions: how often does the agent edit a file it wasn’t asked to, when does the bash tool run a destructive command, which turns burn tokens without touching a file. A Signal pairs a plain-language prompt with a JSON output schema. Laminar runs it live on new traces (Triggers) or backfills it across history (Jobs) and records a structured event every time it matches. From there you query, cluster, and alert on events across every trace.

Every new project ships with a Failure Detector Signal that categorizes issues on any trace over 1000 tokens. Open it from the Signals sidebar to see events as soon as your OpenCode traces arrive.
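To make "a plain-language prompt paired with a JSON output schema" concrete, the sketch below writes one hypothetical Signal for the destructive-bash-command question as a TypeScript object. The SignalDefinition shape, its field names, and the conformsTo helper are illustrative assumptions, not Laminar's Signal API. The schema supports only a flat object of primitive fields. The helper checks the shape of an event payload; it does not decide whether a trace matches.

```typescript
// Minimal JSON Schema subset: a flat object whose fields are primitives.
type PrimitiveType = "string" | "number" | "boolean";

interface ObjectSchema {
  type: "object";
  properties: Record<string, { type: PrimitiveType; description?: string }>;
  required: string[];
}

// Hypothetical Signal shape: a plain-language prompt plus an output schema.
interface SignalDefinition {
  name: string;
  prompt: string;
  outputSchema: ObjectSchema;
}

const destructiveBash: SignalDefinition = {
  name: "destructive-bash-command",
  prompt:
    "Did the agent run a bash command that deletes or overwrites files " +
    "the user did not ask it to touch? If so, report the command.",
  outputSchema: {
    type: "object",
    properties: {
      command: { type: "string", description: "The offending bash command" },
      severity: { type: "string", description: "low, medium, or high" },
      userRequested: { type: "boolean" },
    },
    required: ["command", "severity"],
  },
};

// True if every required key is present and every key in the event is declared
// in the schema with a matching primitive type. Undeclared keys are rejected.
function conformsTo(event: Record<string, unknown>, schema: ObjectSchema): boolean {
  for (const key of schema.required) {
    if (!(key in event)) return false;
  }
  for (const [key, value] of Object.entries(event)) {
    const prop = schema.properties[key];
    if (!prop || typeof value !== prop.type) return false;
  }
  return true;
}
```

Keeping the schema small and typed is what makes the recorded events easy to query and cluster later.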

Query across traces

- SQL editor for ad-hoc queries across traces, spans, signals, and evals.

- SQL API for programmatic access from scripts and pipelines.

- CLI (lmnr-cli sql query) for terminal-driven queries and piping JSON into shell tools or coding agents.

- MCP server to query Laminar directly from OpenCode, Claude Code, Cursor, or Codex.

Troubleshooting

I don't see any traces in Laminar


- LMNR_PROJECT_API_KEY must be set in the environment of the process that launches the OpenCode server, not just in the process that creates the OpenCode client. The plugin runs on the server side and reads the key there.

- Check the OpenCode startup log for "Laminar tracing initialized → ...". If you see "LMNR_PROJECT_API_KEY not set, skipping plugin initialization", the plugin loaded but had no key.

- Confirm opencode.json uses the key "plugin" (singular). "plugins" silently does nothing.
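The first and last checks in the troubleshooting list above can be scripted. The sketch below is a hypothetical pre-flight helper, not part of Laminar or OpenCode. It inspects an already-parsed opencode.json object and an environment map, and it talks to neither service. It assumes the plugin key holds an array of package names, as in { "plugin": ["@lmnr-ai/opencode-plugin"] }.

```typescript
// Hypothetical pre-flight check mirroring the troubleshooting list.
// Assumes opencode.json's "plugin" key is an array of package names.

const PLUGIN_NAME = "@lmnr-ai/opencode-plugin";

type Env = Record<string, string | undefined>;

function diagnose(config: Record<string, unknown>, env: Env): string[] {
  const problems: string[] = [];

  // The plugin reads the key from the server process's environment.
  if (!env["LMNR_PROJECT_API_KEY"]) {
    problems.push("LMNR_PROJECT_API_KEY is not set in the server's environment");
  }

  // Common mix-up: "plugins" (plural) instead of "plugin".
  if ("plugins" in config) {
    problems.push('opencode.json uses "plugins"; the key must be "plugin"');
  }

  // The Laminar plugin must be listed under "plugin".
  const plugins = config["plugin"];
  if (!Array.isArray(plugins) || !plugins.includes(PLUGIN_NAME)) {
    problems.push(`opencode.json "plugin" does not list ${PLUGIN_NAME}`);
  }

  return problems;
}
```

In Node you could run it as diagnose(JSON.parse(readFileSync("opencode.json", "utf8")), process.env) from the same shell that launches the OpenCode server. That way it sees the same environment the plugin will.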

Traces from my TypeScript caller aren't linked to the server turn


- Make sure both sides are on a current @lmnr-ai/lmnr (install with @latest). The caller-side instrumentation and the plugin’s context extraction need to match up.

- The server process also needs the plugin in opencode.json. Without it, the caller-side span lands in Laminar but the server spans land in their own trace.

- Wrap the client.session.prompt call in observe (or another active span). The SDK instrumentation only injects the context if there is one.

Self-hosting Laminar


Export LMNR_BASE_URL and LMNR_GRPC_PORT in the same environment as LMNR_PROJECT_API_KEY. For a local OSS instance, that’s LMNR_BASE_URL=http://localhost and LMNR_GRPC_PORT=8001. The plugin passes these through when it initializes Laminar.

What’s next

Viewing traces

Read the transcript view, filter, and search across traces.

Signals

Detect behaviors and failures across every OpenCode turn, then query, cluster, and alert on them.

SQL editor and MCP server

Query traces programmatically from the UI, API, or your IDE.

Tracing structure

Sessions, metadata, and tags for deeper control.

Claude Agent SDK

Running OpenCode alongside Claude Agent SDK? Trace both here.